AI Solutions

- Network Test

- Interconnect Test

- Compute Test

- Component Test

Explore AI solutions that simplify benchmarking, validate network performance, and optimize data center efficiency. Test lossless Ethernet at speeds up to 1.6T and evaluate cluster performance for training and inference using realistic, high-density traffic, workload, and protocol emulation, which reduces dependence on GPU-based lab setups. Model AI-specific traffic patterns and workload profiles to understand how network parameters affect performance at both the component and system levels.
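As a rough illustration of how a network parameter such as link speed shapes the completion time of an AI collective, the sketch below uses the textbook ring all-reduce cost model. In this model a ring all-reduce runs 2(n-1) steps, and in each step every rank sends 1/n of the message. It assumes one dedicated link per rank, no congestion or retransmission, and a fixed per-step latency. The function name and parameters are hypothetical, and this is not how any Keysight emulation product computes its results.

```python
def ring_allreduce_time_s(message_bytes: float, num_ranks: int,
                          link_gbps: float, per_step_latency_s: float = 0.0) -> float:
    """Ideal ring all-reduce time under a congestion-free, one-link-per-rank model.

    A ring all-reduce runs 2 * (n - 1) steps (reduce-scatter, then all-gather);
    in each step every rank sends message_bytes / n over its link.
    """
    if num_ranks < 2:
        return 0.0  # a single rank has nothing to exchange
    steps = 2 * (num_ranks - 1)
    chunk_bytes = message_bytes / num_ranks
    link_bytes_per_s = link_gbps * 1e9 / 8  # convert Gb/s to bytes/s
    return steps * (chunk_bytes / link_bytes_per_s + per_step_latency_s)


if __name__ == "__main__":
    # Hypothetical job: a 1 GB gradient buffer reduced across 8 ranks.
    for gbps in (400, 800, 1600):
        t = ring_allreduce_time_s(1e9, 8, gbps, per_step_latency_s=5e-6)
        print(f"{gbps:>5} Gb/s link: {t * 1e3:.2f} ms")
```

Doubling the link speed halves the bandwidth term, but the fixed per-step latency term does not shrink, which is why small messages tend to be latency-bound rather than bandwidth-bound.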

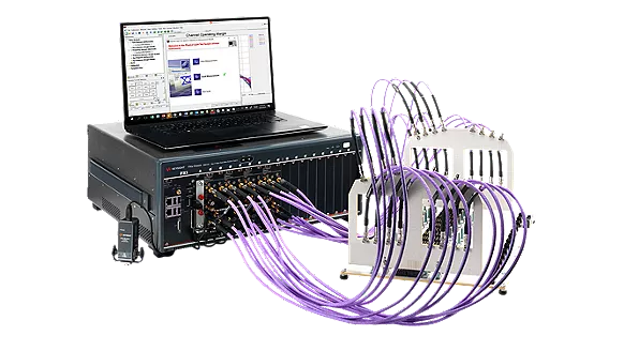

Accelerate development of AI data center interconnects with AI solutions that validate electrical and optical transceivers under real-world performance conditions. Simplify compliance and production testing for 800G and 1.6T systems using high-bandwidth, low-noise instruments and automated workflows that improve throughput and reduce test time. Enable R&D for 3.2T Ethernet and beyond with scalable design and test platforms engineered to support multiple generations of high-speed networking standards.

Advance AI-ready semiconductor and high-speed digital design with AI solutions optimized for AI data center architectures. Debug memory and PCB designs, minimize design spins, and accelerate development with high-accuracy instruments for signal analysis and validation. Automate compliance testing for PCI Express® (PCIe®), double data rate (DDR) memory, and Compute Express Link (CXL) standards to simplify workflows and ensure reliable, standards-based performance.

Explore solutions for AI data center infrastructure, built in collaboration with leading network equipment manufacturers. Debug network components, verify compliance, and characterize power integrity using optical and electrical simulation, validation, and test solutions that cover every layer of the network stack. Reduce design risk and setup complexity with integrated tools for design, validation, and automated test, ensuring interoperability and signal integrity at high speeds.
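Power integrity characterization is commonly framed around a target impedance for the power distribution network (PDN). This is the impedance the network must stay below, across the frequency range of interest, so that a load transient does not push the supply rail outside its ripple budget. The sketch below computes that first-order figure. The rail voltage, ripple allowance, and transient current are hypothetical example values. Real analysis also accounts for frequency dependence, which this sketch ignores.

```python
def pdn_target_impedance_ohm(rail_v: float, ripple_fraction: float,
                             transient_a: float) -> float:
    """First-order PDN target impedance: allowed ripple voltage / transient current."""
    if transient_a <= 0:
        raise ValueError("transient current must be positive")
    allowed_ripple_v = rail_v * ripple_fraction  # e.g. 3 % of the rail voltage
    return allowed_ripple_v / transient_a


if __name__ == "__main__":
    # Hypothetical accelerator core rail: 0.8 V, 3 % ripple budget, 100 A load step.
    z = pdn_target_impedance_ohm(0.8, 0.03, 100.0)
    print(f"target impedance: {z * 1e3:.3f} mOhm")
```

The sub-milliohm result illustrates why high-current accelerator rails leave very little margin in PDN design.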


AI Solutions FAQs

An AI solution is more than a model: it is an orchestrated system of data, compute, and operations, optimized for tasks such as inference, prediction, and automation. In infrastructure-heavy contexts such as data centers, AI solutions must integrate with the compute stack (DDR/HBM memory, PCIe/CXL links), the interconnects (400G, 800G, 1.6T), and the networking protocols (RDMA, typically carried over Ethernet as RoCEv2). Scalability depends on these layers sustaining low-jitter data movement, low latency, and high signal integrity under workload stress.

To function reliably at scale, an AI solution must combine:

- Compute: HBM and DDR memory bandwidth, paired with PCIe/CXL interconnects to accelerators and expanded memory

- Interconnect: 800G/1.6T backbones and 224G SerDes validated for signal quality and modulation

- Network: Low-latency communication with bandwidth optimized for collective operations (e.g., all-reduce)

- Power: Thermal-aware design and power integrity tools to manage consumption and prevent hotspots
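The 224G SerDes figure is easier to reason about as a symbol rate. Assuming PAM4 signaling (2 bits per symbol), the usual modulation at this lane speed, a nominal 224 Gb/s lane runs at 112 GBd with a Nyquist frequency of 56 GHz, which sets the bandwidth the test equipment must cover. The helper names below are illustrative. Treating each 224G lane as carrying about 200 Gb/s of payload is an assumption that ignores FEC and encoding details.

```python
import math


def symbol_rate_gbd(bit_rate_gbps: float, levels: int) -> float:
    """Symbol rate (GBd) of a multi-level line code: bit rate / log2(levels)."""
    return bit_rate_gbps / math.log2(levels)


def nyquist_ghz(baud_gbd: float) -> float:
    """Nyquist frequency is half the symbol rate."""
    return baud_gbd / 2


def lanes_needed(aggregate_gbps: float, payload_per_lane_gbps: float) -> int:
    """Number of lanes required to carry an aggregate payload rate."""
    return math.ceil(aggregate_gbps / payload_per_lane_gbps)


if __name__ == "__main__":
    pam4 = symbol_rate_gbd(224, levels=4)
    nrz = symbol_rate_gbd(224, levels=2)
    print(f"PAM4: {pam4:.0f} GBd, Nyquist {nyquist_ghz(pam4):.0f} GHz")
    print(f"NRZ:  {nrz:.0f} GBd, Nyquist {nyquist_ghz(nrz):.0f} GHz")
    # Assumption: about 200 Gb/s of payload per 224G lane.
    print("lanes for 1.6T:", lanes_needed(1600, 200))
    print("lanes for 800G:", lanes_needed(800, 200))
```

PAM4 halves the symbol rate compared with NRZ, but it stacks three eyes in the same voltage swing, so each eye has roughly one third of the NRZ amplitude. This is one reason modulation quality is hard to maintain at these speeds.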

Key performance indicators (KPIs) such as jitter, crosstalk, recovery time, algorithm bandwidth, bus bandwidth, and job completion time are tracked to ensure sustained performance across environments.
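Algorithm bandwidth and bus bandwidth are the two throughput figures reported by NVIDIA's nccl-tests benchmarks. Algorithm bandwidth is the data size divided by the elapsed time. Bus bandwidth scales that value by a per-collective factor so results can be compared directly with link speed. The sketch below applies the factors documented for those tests: 2(n-1)/n for all-reduce, (n-1)/n for all-gather and reduce-scatter, and 1 for broadcast. The measurement values in the example are hypothetical.

```python
from typing import Callable, Dict

# Bus-bandwidth correction factor per collective, as a function of rank count n.
BUS_BW_FACTOR: Dict[str, Callable[[int], float]] = {
    "all_reduce": lambda n: 2 * (n - 1) / n,
    "all_gather": lambda n: (n - 1) / n,
    "reduce_scatter": lambda n: (n - 1) / n,
    "broadcast": lambda n: 1.0,
}


def alg_bw_gbs(size_bytes: float, elapsed_s: float) -> float:
    """Algorithm bandwidth in GB/s (1 GB = 1e9 bytes): data size / elapsed time."""
    return size_bytes / elapsed_s / 1e9


def bus_bw_gbs(collective: str, size_bytes: float, elapsed_s: float,
               num_ranks: int) -> float:
    """Bus bandwidth: algorithm bandwidth scaled by the collective's factor."""
    return alg_bw_gbs(size_bytes, elapsed_s) * BUS_BW_FACTOR[collective](num_ranks)


if __name__ == "__main__":
    # Hypothetical measurement: a 1 GB all-reduce across 8 ranks took 40 ms.
    alg = alg_bw_gbs(1e9, 0.040)
    bus = bus_bw_gbs("all_reduce", 1e9, 0.040, 8)
    print(f"algbw = {alg:.2f} GB/s, busbw = {bus:.2f} GB/s")
```

Comparing bus bandwidth with per-link speed (for example, 50 GB/s for a 400G link) shows how close the fabric runs to line rate.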

AI solutions differ significantly by industry based on latency tolerance, compute intensity, and data locality. For example:

- Financial services require ultra-low latency and high interconnect integrity (PCIe Gen5 / CXL).

- Healthcare relies on high memory bandwidth for imaging workloads (DDR / HBM performance).

- Cloud / hyperscale operators prioritize thermal efficiency and job completion time across rack-scale deployments.
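To put the PCIe Gen5 and DDR requirements above into numbers, the sketch below computes raw peak bandwidth. It assumes the 128b/130b line encoding used by PCIe Gen3 through Gen5; PCIe 6.0 moves to PAM4 with FLIT-based encoding, so the formula does not apply there. For DDR it assumes a 64-bit data bus without ECC bits. Protocol overheads such as packet headers and memory refresh are ignored, so real throughput is lower.

```python
def pcie_peak_gbs(gt_per_s: float, lanes: int) -> float:
    """Per-direction PCIe peak in GB/s for 128b/130b-encoded links (Gen3 to Gen5)."""
    payload_gbps = gt_per_s * lanes * 128 / 130  # remove line-encoding overhead
    return payload_gbps / 8  # convert bits to bytes


def ddr_peak_gbs(mega_transfers_per_s: float, bus_width_bits: int = 64) -> float:
    """DDR peak in GB/s: transfers per second times bytes per transfer."""
    return mega_transfers_per_s * 1e6 * (bus_width_bits / 8) / 1e9


if __name__ == "__main__":
    print(f"PCIe Gen5 x16: {pcie_peak_gbs(32, 16):.1f} GB/s per direction")
    print(f"DDR5-6400 (64-bit): {ddr_peak_gbs(6400):.1f} GB/s")
```

With these assumptions a PCIe Gen5 x16 link peaks at about 63 GB/s per direction and a DDR5-6400 module at 51.2 GB/s.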

These trade-offs must be modeled and benchmarked with workload emulation and simulation tools.

Benefits of AI solutions include workload automation, reduced operational costs, and smarter system management. Infrastructure-aware AI solutions can dynamically allocate compute, route data efficiently, and anticipate failures based on telemetry.
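One simple way to anticipate failures from telemetry is to smooth a degradation indicator and raise an alert when the smoothed value crosses a limit, before the component fails outright. The sketch below applies an exponentially weighted moving average (EWMA) to a per-link error-rate series. The metric, smoothing factor, threshold, and sample data are all hypothetical, and production systems typically use richer models than this.

```python
from typing import Iterable, List, Optional


def ewma_alerts(samples: Iterable[float], alpha: float = 0.3,
                threshold: float = 1e-6) -> List[int]:
    """Return the indices at which the EWMA of a telemetry metric exceeds threshold.

    alpha weights the newest sample; a smaller alpha smooths more heavily.
    """
    alerts: List[int] = []
    ewma: Optional[float] = None
    for i, x in enumerate(samples):
        # Seed with the first sample, then blend each new sample into the average.
        ewma = x if ewma is None else alpha * x + (1 - alpha) * ewma
        if ewma > threshold:
            alerts.append(i)
    return alerts


if __name__ == "__main__":
    # Hypothetical pre-FEC error rate for one link, trending upward.
    link_errors = [1e-8, 1e-8, 2e-8, 5e-7, 3e-6, 8e-6]
    print(ewma_alerts(link_errors, alpha=0.5, threshold=1e-6))
```

Smoothing keeps a single noisy reading from triggering an alert, while a sustained upward trend still crosses the threshold.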

Key challenges in deploying AI solutions include:

- Design validation across memory, interconnect, and power domains

- Maintaining modulation quality at high signaling speeds (224 Gbps, 1.6T)

- Controlling thermal and power integrity under AI load conditions

Without thorough emulation and benchmarking, AI deployments risk failure due to unexpected jitter, latency, or bandwidth bottlenecks.
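Latency and jitter problems usually appear in the tail of the distribution rather than in the average, so benchmark reports typically summarize samples with percentiles. The sketch below computes nearest-rank p50 and p99 latency, plus two simple jitter measures: population standard deviation and peak-to-peak spread. The sample values are hypothetical. These are generic statistics, not the definitions used by any particular test standard.

```python
import math
import statistics
from typing import Dict, Sequence


def percentile_nearest_rank(ordered: Sequence[float], p: float) -> float:
    """Nearest-rank percentile of an already sorted, non-empty sequence."""
    rank = max(1, math.ceil(p / 100 * len(ordered)))
    return ordered[rank - 1]


def latency_summary(samples_us: Sequence[float]) -> Dict[str, float]:
    """Summarize latency samples (microseconds) with tail and jitter statistics."""
    if not samples_us:
        raise ValueError("need at least one sample")
    ordered = sorted(samples_us)
    return {
        "p50": percentile_nearest_rank(ordered, 50),
        "p99": percentile_nearest_rank(ordered, 99),
        "stdev": statistics.pstdev(ordered),
        "peak_to_peak": ordered[-1] - ordered[0],
    }


if __name__ == "__main__":
    # Hypothetical round-trip latencies in microseconds, with one outlier.
    samples = [10, 11, 10, 12, 10, 11, 10, 50, 10, 11]
    print(latency_summary(samples))
```

Here the median looks healthy while p99 exposes the single 50 µs outlier. In synchronous training every worker waits for the slowest one, so tail events like this one set the pace of each step.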

AI data pipelines must be designed with infrastructure constraints in mind. In high-performance environments:

- Data is pre-processed close to memory (e.g., near-memory processing with HBM)

- CXL, which runs over the PCIe physical layer, enables memory pooling and efficient shared access

- Network configurations allow low-latency transfer across compute nodes

Additionally, telemetry collected during early validation (e.g., from signal integrity tests or workload emulation) helps refine model performance and training strategies.

While our AI data center solutions focus on system-level performance and scalability, Keysight also offers software solutions for validating AI in safety-critical domains such as automotive. With Keysight, you can achieve trustworthy AI deployments by analyzing data sets, validating model behavior, and monitoring AI in deployment.
